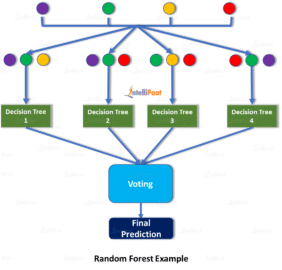

Random Forest is a supervised machine learning algorithm that is used for both classification and regression problems. The algorithm establishes its outcome from the predictions of its decision trees: for regression problems, a random forest prediction is the average (mean) of the predictions produced by the trees in the forest. Increasing the number of trees generally makes that averaged outcome more stable, although the gains diminish as the forest grows.

Random Forest Regression Model: We will use the sklearn module for training our random forest regression model, specifically the `RandomForestRegressor` class. Predicted values are obtained by dropping test data down the trained forest (the forest itself is grown using the training data). I conducted a fair amount of EDA but won’t include all of the steps, to keep this article focused on the random forest model itself. Fitting a model and making predictions takes two calls: `forest.fit(Xtrain, ytrain)` and then `predictions = forest.predict(Xtest)`. Once the predictions are available, the performance of the random forest can be evaluated in scikit-learn.

Spatial predictors are surrogates of variables driving the spatial structure of a response variable. They can be generated and selected automatically for spatial regression with random forest.

Here is a step-by-step method explaining what we are doing. The assumptions are that your new data is in a data frame called `new_data` and your trained random forest model is called `my_forest`. Replace these with the names of your objects. First, add a column with the row number: `new_data %>% rowid_to_column()`. Next, save the rows with NA in a separate data frame with `filter(!complete.cases(.))`, and keep only the rows with no NA with `filter(complete.cases(.))`. Alternatively, predict only for the complete rows in a single step: `mutate(predicted = case_when(complete.cases(.) ~ predict(my_forest, newdata = . …`. I can even maintain the original row order. If this is a classification problem, let me know and I can alter the code.
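As a rough illustration of the fit, predict, and evaluate steps described above, here is a minimal sketch. The synthetic dataset from `make_regression`, the choice of 100 trees, and the metrics (MSE and R²) are assumptions made for the example, not the article's actual data or settings. It also checks that the forest prediction matches the average of the individual tree predictions.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.ensemble import RandomForestRegressor
from sklearn.metrics import mean_squared_error, r2_score
from sklearn.model_selection import train_test_split

# Synthetic data stands in for the article's dataset (assumption).
X, y = make_regression(n_samples=500, n_features=8, noise=10.0, random_state=0)
Xtrain, Xtest, ytrain, ytest = train_test_split(
    X, y, test_size=0.25, random_state=0
)

# Fit the forest on the training data, then predict on the held-out data.
forest = RandomForestRegressor(n_estimators=100, random_state=0)
forest.fit(Xtrain, ytrain)
predictions = forest.predict(Xtest)

# Average the predictions of the individual trees by hand.
per_tree = np.stack([tree.predict(Xtest) for tree in forest.estimators_])
tree_average = per_tree.mean(axis=0)
print("forest == mean of trees:", np.allclose(predictions, tree_average))

# Two common regression metrics for evaluating the fitted forest.
mse = mean_squared_error(ytest, predictions)
r2 = r2_score(ytest, predictions)
print(f"MSE: {mse:.2f}, R^2: {r2:.3f}")
```

Holding out a test split keeps the evaluation separate from the data the trees were grown on, which is the usual reason for splitting before fitting.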
I think I understand what you want. You want to take a trained model and make predictions on new data which may have missing values. Rather than impute the missing values, you want the predicted value to be NA for those rows with missing values.

Random forest is a commonly used machine learning algorithm, trademarked by Leo Breiman and Adele Cutler, which combines the output of multiple decision trees to reach a single result. Its ease of use and flexibility have fueled its adoption, as it handles both classification and regression problems.

The scenario mentioned in the top-voted answer will only occur when every leaf of every tree contains data points belonging to only one class. Otherwise a leaf can hold a mix of classes: for example, if the right leaf in the second child split is 75% yellow, the predicted probability of class yellow for a sample landing there will be 75%.
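The same idea (predict only for complete rows, leave NA elsewhere, keep the original row order) can be sketched in Python with pandas and scikit-learn. This is an analogue rather than a translation of the R answer: the column names `a`, `b`, `c`, the synthetic training data, and the regression setting are all assumptions made for illustration; `new_data` and `my_forest` follow the naming used above.

```python
import numpy as np
import pandas as pd
from sklearn.ensemble import RandomForestRegressor

rng = np.random.default_rng(0)

# Hypothetical training data and model (assumptions for the example).
train = pd.DataFrame(rng.normal(size=(200, 3)), columns=["a", "b", "c"])
target = 2.0 * train["a"] + train["b"] + rng.normal(scale=0.1, size=200)
my_forest = RandomForestRegressor(n_estimators=50, random_state=0)
my_forest.fit(train, target)

# New data with missing values in rows 1 and 4.
new_data = pd.DataFrame(rng.normal(size=(6, 3)), columns=["a", "b", "c"])
new_data.loc[1, "b"] = np.nan
new_data.loc[4, "a"] = np.nan

# Boolean mask of rows with no missing values (like complete.cases in R).
complete = new_data.notna().all(axis=1)

# Start with NaN everywhere, then fill in predictions for complete rows only.
predicted = pd.Series(np.nan, index=new_data.index, name="predicted")
predicted.loc[complete] = my_forest.predict(new_data.loc[complete])

# Attaching by index keeps the original row order intact.
result = new_data.assign(predicted=predicted)
print(result)
```

Because the predictions are written back through the original index, no separate row-number column or re-sorting step is needed here.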

Look at this image of a single decision tree to understand what it means to have different classes within the leaf. A single tree calculates the probability by looking at the distribution of different classes within the leaf: the class probability of a single tree is the fraction of samples of the same class in a leaf. I am afraid the top-voted answer isn't correct (at least for the latest sklearn implementation). According to the docs, the predicted probability is computed as the mean predicted class probabilities of the trees in the forest. In other words, since Random Forest is a collection of decision trees, it predicts the probability of a new sample by averaging over its trees.

The regression case works the same way: a prediction from the Random Forest Regressor is an average of the predictions produced by the trees in the forest. Because prediction time increases with the number of predictors in random forests, a good practice is to grow the random forest using a reduced predictor set.

The original question was as follows. Sometimes I need to have the probabilities of labels/classes instead of the labels/classes themselves. Instead of having Spam/Not Spam as labels of emails, I wish to have only, for example, a 0.78 probability that a given email is Spam. For this purpose, I'm using `predict_proba()` with `RandomForestClassifier` as follows: `clf = RandomForestClassifier(n_estimators=10, max_depth=None, …` and `predictions = classifier.predict_proba(Xtest)`, where the second column is for the class Spam. However, I have two main issues with the results about which I am not confident. The first issue is whether the results represent the probabilities of the labels without being affected by the size of my data. The second issue is that the results show only one digit, which is not very specific in cases where a 0.701 probability is very different from 0.708. Is there any way to get the next 5 digits, for example?
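The following sketch illustrates the averaging behaviour described above and why a forest of 10 fully grown trees tends to show only one decimal digit. The synthetic dataset from `make_classification` and the comparison forest with `max_depth=3` are assumptions added for illustration; they are not part of the original question.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import train_test_split

# Synthetic two-class data stands in for the Spam / Not Spam emails (assumption).
X, y = make_classification(n_samples=600, n_features=10, random_state=0)
Xtrain, Xtest, ytrain, ytest = train_test_split(
    X, y, test_size=0.25, random_state=0
)

# Fully grown trees: leaves are usually pure, so each tree outputs 0 or 1
# and the mean over 10 trees is a multiple of 0.1.
deep = RandomForestClassifier(n_estimators=10, max_depth=None, random_state=0)
deep.fit(Xtrain, ytrain)
deep_proba = deep.predict_proba(Xtest)

# predict_proba is the mean of the per-tree class probabilities.
mean_of_trees = np.mean(
    [tree.predict_proba(Xtest) for tree in deep.estimators_], axis=0
)
print("matches mean of trees:", np.allclose(deep_proba, mean_of_trees))

# Shallow trees keep mixed leaves, so each tree returns a class fraction
# and the averaged probabilities take finer-grained values.
shallow = RandomForestClassifier(n_estimators=10, max_depth=3, random_state=0)
shallow.fit(Xtrain, ytrain)
shallow_proba = shallow.predict_proba(Xtest)

np.set_printoptions(precision=5, suppress=True)
print("deep forest, class 1:   ", deep_proba[:5, 1])
print("shallow forest, class 1:", shallow_proba[:5, 1])
```

Under these assumptions, more digits come from more trees or from limiting tree growth (for example via `max_depth` or `min_samples_leaf`), not from a display setting.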
